Summary

We show that the generative dynamics of diffusion models exhibit a spontaneous symmetry-breaking phenomenon, resulting in two distinct generative phases:

- Phase 1: A linear steady-state dynamics centered around a fixed point.

- Phase 2: An attractor dynamics that guides the model towards the data manifold.

The period of instability during this transition contributes to the diversity of generated samples.

We propose a Gaussian late initialization scheme that improves FID by up to 3x on fast samplers. The scheme exploits the observation that the early phase contributes little to the quality of the final samples.

Abstract

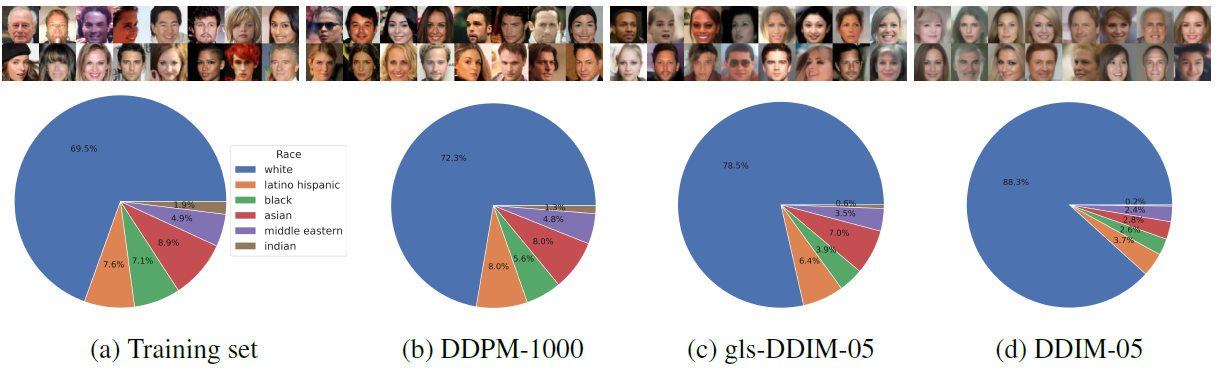

Generative diffusion models have recently emerged as a leading approach for generating high-dimensional data. In this paper, we show that the dynamics of these models exhibit a spontaneous symmetry breaking that divides the generative dynamics into two distinct phases: 1) a linear steady-state dynamics around a central fixed point and 2) an attractor dynamics directed towards the data manifold. These two "phases" are separated by the change in stability of the central fixed point, with the resulting window of instability being responsible for the diversity of the generated samples. Using both theoretical and empirical evidence, we show that an accurate simulation of the early dynamics does not significantly contribute to the final generation, since early fluctuations are reverted to the central fixed point. To leverage this insight, we propose a Gaussian late initialization scheme, which significantly improves model performance, achieving up to 3x FID improvements on fast samplers, while also increasing sample diversity (e.g., racial composition of generated CelebA images). Our work offers a new way to understand the generative dynamics of diffusion models that has the potential to bring about higher performance and less biased fast samplers.

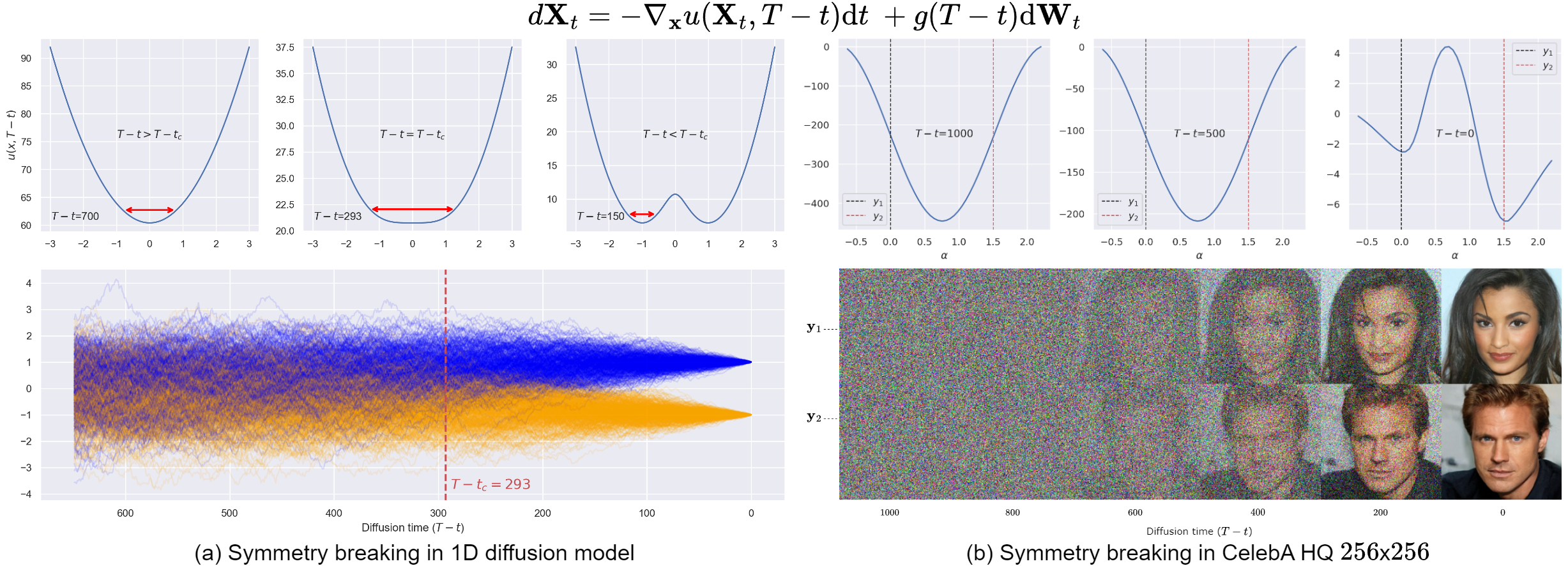

Spontaneous symmetry breaking in one-dimensional diffusion models

To illustrate spontaneous symmetry breaking in diffusion models, we begin with a one-dimensional example. We use a dataset consisting of only two points: -1 and 1, each with an equal probability of selection (See Figure 1a). For the purpose of our analysis, we re-express the generative dynamics of the diffusion model in terms of a potential energy function \(u(x, t)\). The corresponding potential equation for the VP-SDE (DDPM), ignoring constant terms, is the following:
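The displayed equation is not reproduced on this page. As a reconstruction rather than a quotation, a form consistent with the setup above can be written down under two assumptions: \(\theta\) denotes the factor by which the data points \(\pm 1\) are scaled at a given noise level, so that the noise-corrupted distribution is an equal mixture of two Gaussians with means \(\pm\theta\) and variance \(1-\theta^2\), and \(\beta(t)\) denotes the noise schedule of the VP-SDE. Up to additive constants, the potential then reads:

\[
u(x, t) = \beta(t)\left( -\frac{1}{4}x^2 - \log\left( e^{-\frac{(x-\theta)^2}{2(1-\theta^2)}} + e^{-\frac{(x+\theta)^2}{2(1-\theta^2)}} \right) \right)
\]

Here the \(-\frac{1}{4}x^2\) term comes from the linear drift of the VP-SDE and the logarithm is the log-density of the noise-corrupted data, so that the generative drift equals \(-\partial u / \partial x\).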

We can now analyze how the shape of the potential \(u(x, t)\) changes as the time-dependent parameter \(\theta\) varies. A symmetry-breaking event is marked by a qualitative change in the shape of the potential well: once \(\theta\) passes a critical value \(\theta_c\), the single well splits in two. Before the critical point, the system exhibits mean reversion towards a stable fixed point located at the origin \(x=0\) (solid green line in the right-hand graph of the figure below). Once the symmetry breaks, this fixed point becomes unstable (dashed green line) and two new stable fixed points emerge (shown in blue and orange). These points act as attractors that guide the system towards the data manifold.

By exploring the potential below, you can observe the change in the shape of the potential well at the critical value \(\theta_c\), as described theoretically in our paper. In the left graph, you can manipulate the potential well, while the right graph shows the corresponding fixed points of \(x\). The vertical brown dashed line marks the position of \(\theta_c\). The right graph depicts a pitchfork bifurcation: a qualitative change in the behavior of a dynamical system as a parameter, in this case \(\theta\), is varied.
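The bifurcation described above can also be checked numerically. The following is a minimal sketch, not the code behind the interactive figure. It assumes the VP-SDE potential for data at \(\pm 1\) takes the form \(-\frac{1}{4}x^2 - \log\left(e^{-(x-\theta)^2/(2(1-\theta^2))} + e^{-(x+\theta)^2/(2(1-\theta^2))}\right)\), with the positive prefactor \(\beta(t)\) dropped because it does not move the fixed points. The function names are illustrative. Under this assumption the curvature at the origin changes sign at \(\theta_c = \sqrt{\sqrt{2}-1} \approx 0.64\), which the bisection below should recover.

```python
import numpy as np

def potential(x, theta):
    """Assumed potential u / beta for data at +-1 (additive constants dropped)."""
    var = 1.0 - theta**2
    # log of the two-component Gaussian mixture, computed stably
    log_mix = np.logaddexp(-(x - theta) ** 2 / (2 * var),
                           -(x + theta) ** 2 / (2 * var))
    return -0.25 * x**2 - log_mix

def curvature_at_origin(theta):
    """Second derivative of the potential at x = 0; its sign gives stability."""
    var = 1.0 - theta**2
    return -0.5 + 1.0 / var - theta**2 / var**2

def critical_theta(lo=1e-6, hi=0.99, tol=1e-12):
    """Bisection for the theta at which the origin loses stability."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if curvature_at_origin(mid) > 0:   # origin still stable
            lo = mid
        else:                              # origin already unstable
            hi = mid
    return 0.5 * (lo + hi)

def stable_fixed_points(theta, grid=None):
    """Local minima of the potential on a grid (the stable fixed points)."""
    if grid is None:
        grid = np.linspace(-2.0, 2.0, 4001)
    u = potential(grid, theta)
    is_min = (u[1:-1] <= u[:-2]) & (u[1:-1] < u[2:])
    return grid[1:-1][is_min]

if __name__ == "__main__":
    print("theta_c ~", critical_theta())
    print("below theta_c:", stable_fixed_points(0.3))
    print("above theta_c:", stable_fixed_points(0.9))
```

Sweeping `theta` from 0 to just below 1 and plotting `stable_fixed_points(theta)` against `theta` traces out the two outer branches of the pitchfork, with the central branch stable only below `critical_theta()`.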


In summary, we study how the shape of the potential changes as \(\theta\) varies. At the critical point, the central fixed point loses stability and new stable points emerge that guide the system towards the data points. You can explore these ideas by manipulating the potential graph and observing the corresponding changes in the system's behavior.

Spontaneous symmetry breaking in trained diffusion models

Late start initialization

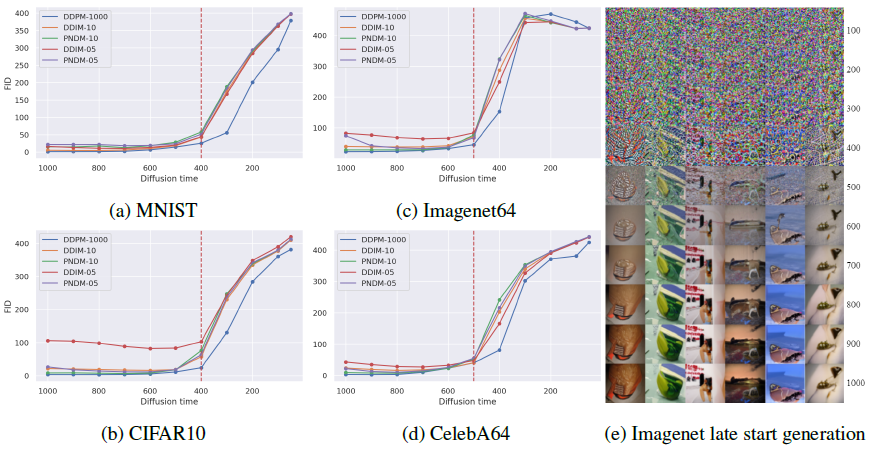

In trained diffusion models, this phenomenon can be observed by analyzing the generative performance as a function of the time at which generation is started. We found that the performance remains largely unchanged up to a ‘critical time’ and then rapidly deteriorates.

Boost performance on fast samplers

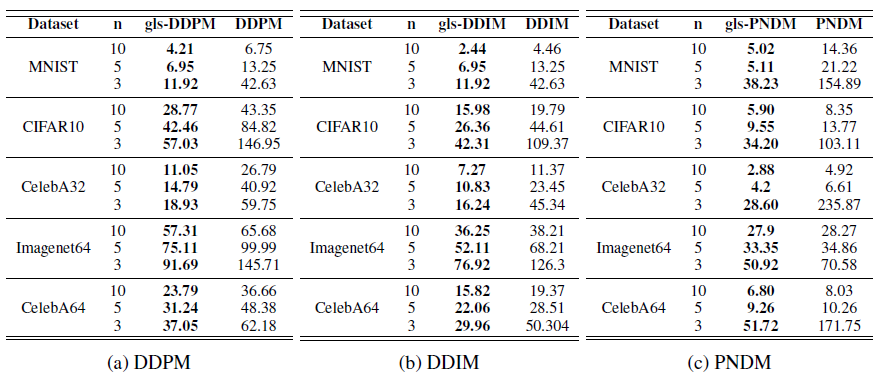

In practice, we can start the generative process just before the critical time, since the distribution at that point is still close to a multivariate Gaussian. To leverage this, we propose a Gaussian initialization scheme called "Gaussian late start" (gls). It estimates the mean and covariance matrix of the noise-corrupted dataset at the initialization time and uses the resulting Gaussian distribution as the starting point of sample generation. This addresses the distributional mismatch that arises from a late start initialization. Tables 1a, 1b, and 1c present results for the stochastic DDPM sampler and for the deterministic DDIM and PNDM samplers, showing the performance boost of Gaussian late start over the vanilla samplers.
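The initialization step described above can be sketched as follows. This is a minimal illustration, not the authors' implementation. It assumes a VP-SDE forward process in which a clean sample \(x_0\) is corrupted as \(x_s = \theta_s x_0 + \sqrt{1-\theta_s^2}\,\epsilon\), so that the mean and covariance of the noise-corrupted dataset follow in closed form from the moments of the clean data. The function name `gaussian_late_start` and the parameter `theta_s` are hypothetical.

```python
import numpy as np

def gaussian_late_start(data, theta_s, n_samples, rng=None):
    """Sample initial states from a Gaussian fitted to the noise-corrupted data.

    data      : array of shape (n, d), clean training samples (flattened)
    theta_s   : signal scale at the late start time (assumed VP-SDE:
                x_s = theta_s * x_0 + sqrt(1 - theta_s**2) * eps)
    n_samples : number of initial states to draw
    """
    rng = np.random.default_rng() if rng is None else rng
    data = np.asarray(data, dtype=float)
    d = data.shape[1]

    # Moments of the corrupted data, derived from the clean-data moments.
    mean = theta_s * data.mean(axis=0)
    data_cov = np.atleast_2d(np.cov(data, rowvar=False))
    cov = theta_s**2 * data_cov + (1.0 - theta_s**2) * np.eye(d)

    # These samples replace the usual N(0, I) draw at the start of generation.
    return rng.multivariate_normal(mean, cov, size=n_samples)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    toy_data = rng.normal(size=(500, 3)) * [1.0, 2.0, 3.0] + [1.0, 0.0, -1.0]
    x_init = gaussian_late_start(toy_data, theta_s=0.5, n_samples=4, rng=rng)
    print(x_init.shape)
```

The returned array would then be handed to the sampler as its state at the late start time, with the remaining denoising steps run from that time onwards. For image datasets the full covariance matrix can be large, so estimating and storing it once per start time is the natural design choice.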

The Striking Performance Boost

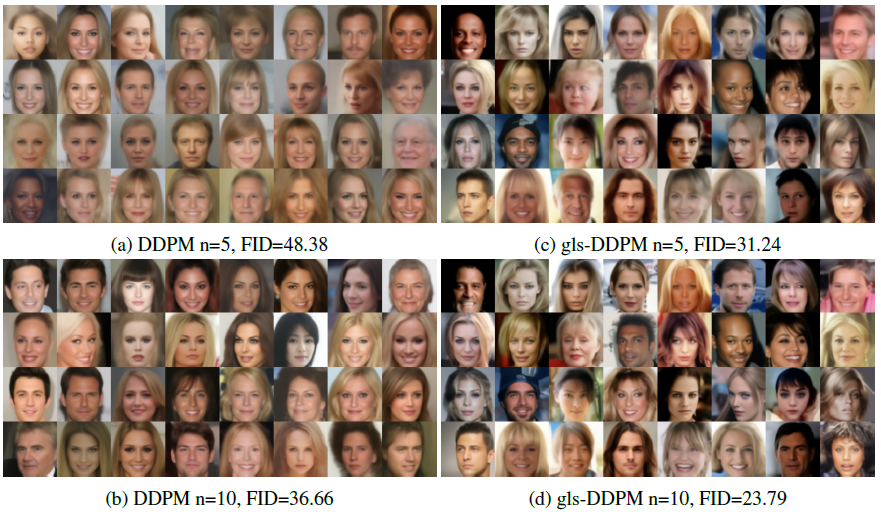

The performance boost is striking on some datasets: on CelebA 32, FID improves by 2x with 10 denoising steps and by 3x with 5 denoising steps, as shown in Figure 3.

Diversity analysis

Our analysis suggests that to achieve diverse and high-quality samples, it is crucial to sample within a specific time window around the critical time. This is because small changes during this window are amplified by the system’s instability and have a significant impact on the final samples. Consistent with this, our Gaussian late initialization method improves sample diversity compared to the standard fast sampler (see Figure 4).

Citation

@article{raya2023spontaneous,
  title={Spontaneous symmetry breaking in generative diffusion models},
  author={Gabriel Raya and Luca Ambrogioni},
  year={2023},
  journal={arXiv preprint arXiv:2305.19693}
}